Modeling Kata here again! Models are useful to describe things, systems, knowledge: basically, any information you want to organize and formalize will benefit from a solid formalism like Ecore.

Structuring and describing is nice, but most of the time you then need to evaluate your design. One option is to use validation tools, so that any “error in your design” is shown to you and you can fix it. The drawback of validation is that it doesn’t easily give you the big picture of your design’s quality with respect to the constraints you defined.

Who can say whether these bees invading my garden are organized in a nice or a poor way? That’s definitely not binary information.

Another approach is to design your models with tooling that updates or constructs other parts of the model to give you information about its quality. Let’s take a (quite naive but still interesting) example:

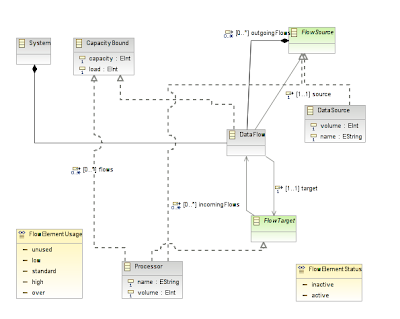

I defined a formalism for a “flow-like” language. You can use it to describe DataSources and Processors linked by DataFlows. Processors and DataFlows are capacity-bounded, which means they have a maximum capacity and, under a given load, will be idling or overused.

Here is a class diagram displaying the simplest parts of the flow.ecore:

Here I’m mixing both the information I’ll describe (a given system with datasources, processors and flows) and the feedback about my design (the flow element usage). Note that every element here might be activated or not (see the FlowElementStatus enumeration).
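To make that mix of design data and feedback concrete, here is a minimal plain-Java sketch. It is not the code generated from flow.ecore: the `CapacityBound` class, the `Usage` enum, the field names and the two `FlowElementStatus` literals are all assumptions made for illustration. Only the enumeration name and the capacity/load idea come from the model described above.

```java
public class FlowModelSketch {
    // The enumeration is named in the post; its two literals are assumed here.
    enum FlowElementStatus { ACTIVE, INACTIVE }

    // Hypothetical feedback categories derived from load versus capacity.
    enum Usage { IDLE, NORMAL, OVERUSED }

    /** A capacity-bounded element (think Processor or DataFlow). */
    static class CapacityBound {
        final String name;
        final int capacity;   // design information: maximum load the element accepts
        FlowElementStatus status = FlowElementStatus.ACTIVE;
        int load;             // feedback: filled in by the rules, not by the designer

        CapacityBound(String name, int capacity) {
            this.name = name;
            this.capacity = capacity;
        }

        /** Feedback about the design, computed from the two values above. */
        Usage usage() {
            if (status == FlowElementStatus.INACTIVE || load == 0) {
                return Usage.IDLE;
            }
            return load > capacity ? Usage.OVERUSED : Usage.NORMAL;
        }
    }

    public static void main(String[] args) {
        CapacityBound parser = new CapacityBound("parser", 10);
        parser.load = 14;
        System.out.println(parser.name + " -> " + parser.usage()); // prints "parser -> OVERUSED"
    }
}
```

The point of the sketch is that `capacity` and `status` are what you design, while `load` and the resulting usage are what the tooling tells you back.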

Now, to define the rules that update each value based on the overall model, I basically have two choices: implement them in Java, or use a rules engine. Implementing them in Java might look like a good idea, but you quickly end up rebuilding an inference engine. Using Drools lets you focus on the rules. From there, the model can be updated live to give feedback on the design quality.
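To illustrate the "rebuilding an inference engine" problem, here is a rough sketch of the hand-written Java route. It is not Drools code and not the actual implementation: the `Node` class, the single "load equals the sum of upstream outputs" rule and the `propagate` loop are hypothetical simplifications. Even with one rule, you already have to write the loop that re-evaluates everything until nothing changes, which is exactly the bookkeeping a rules engine does for you when facts are modified.

```java
import java.util.ArrayList;
import java.util.List;

public class NaivePropagation {
    /** Hypothetical flow element: a source, a data flow or a processor. */
    static class Node {
        final String name;
        final int capacity;
        final List<Node> inputs = new ArrayList<>();
        int load;

        Node(String name, int capacity) {
            this.name = name;
            this.capacity = capacity;
        }

        /** An element cannot pass on more than its capacity. */
        int output() {
            return Math.min(load, capacity);
        }
    }

    /** The single "rule": load is the sum of upstream outputs. Returns true if it changed. */
    static boolean recomputeLoad(Node n) {
        if (n.inputs.isEmpty()) {
            return false; // sources keep the load they were given
        }
        int newLoad = 0;
        for (Node in : n.inputs) {
            newLoad += in.output();
        }
        if (newLoad == n.load) {
            return false;
        }
        n.load = newLoad;
        return true;
    }

    /** Hand-rolled fixpoint loop; terminates only if the graph has no cycle. */
    static int propagate(List<Node> nodes) {
        int passes = 0;
        boolean changed;
        do {
            changed = false;
            for (Node n : nodes) {
                changed |= recomputeLoad(n);
            }
            passes++;
        } while (changed);
        return passes;
    }

    public static void main(String[] args) {
        Node source = new Node("sensor", Integer.MAX_VALUE); // sources are not capacity-bounded
        source.load = 12;
        Node flow = new Node("flow", 10);
        Node processor = new Node("processor", 8);
        flow.inputs.add(source);
        processor.inputs.add(flow);

        // Deliberately listed downstream-first, so one pass is not enough.
        int passes = propagate(List.of(processor, flow, source));
        System.out.println("passes=" + passes
                + " flow.load=" + flow.load
                + " processor.load=" + processor.load);
    }
}
```

Add activation status, several rules that feed each other, or live edits to the model, and this loop has to grow ordering, change tracking and conflict handling. That is the part I would rather leave to the engine.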